Intelligence Without Governance

There is still much wrong in the debate about AI and jobs

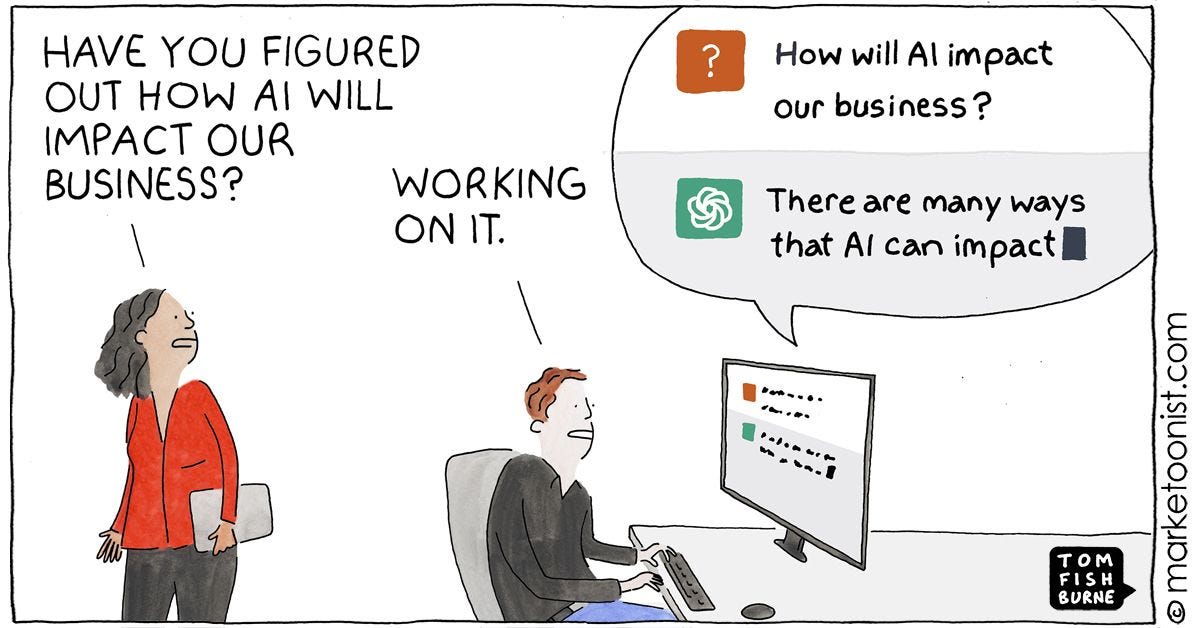

If you are someone who buys a treadmill in January and leaves laundry on it by March, then maybe, just maybe, AI will take your job.

AI Pushback

I think one of the strangest scenes in modern corporate life is the sight of very intelligent adults speaking about artificial intelligence in the tone earlier centuries reserved for saints, oracles, and unusually gifted horses. Why so much reverence? At a panel discussion last week, it was frightening to hear how freely executives traded AI buzzwords. I sense the language was pitched for investors’ ears and had little to do with reality.

The machine, they tell us, will draft the memo, summarize the meeting, prepare the analysis, write the code, recommend the strategy. It is always about to save us from some exhausting portion of ourselves. One listens for long enough and begins to suspect that what many organizations really want is not intelligence, but relief. I think we need to push back against this.

AI elevates capability. It does not eliminate it

I do not say this lightly. Anyone who has spent years inside institutions knows how much human labor is spent not on thought but on the staging of thought. We produce documents to prove that we have considered a problem, presentations to prove that we have discussed it, process notes to prove that we have governed it, and emails to prove that no one can later say they were not copied at 18:43 on a Thursday evening. Into this long comedy of administrative self-protection arrives a machine that can generate all the signs of diligence in seconds. It is no wonder executives feel a little weak at the knees.

Responsibility

But I think the seduction begins in the wrong place. The important fact about AI is not that it can write. The important fact is that it cannot answer for what it writes. It can prepare the analysis, draft the recommendation, construct the options in beautiful prose, even imitate the tones of caution or conviction that human beings mistake for wisdom. But it will not sign the paper. It will not sit in front of a regulator. It will not explain itself to a customer whose mortgage was mishandled, an employee whose job was redesigned into absurdity, or a board suddenly eager to discover who approved the AI. The machine can produce the sentence. It cannot bear the consequence.

That, to me, is the real beginning of the story. Not substitution, but exposure. Not the disappearance of work, but the discovery of what work actually is. The question is not whether AI can produce content. Of course it can, when prompted. The question is whether organizations are prepared to redesign responsibility. Most are not. They want the efficiencies of machine generation without the inconvenience of institutional self-examination. They want speed without submission to discipline. They want intelligence without governance. In corporate life, this is a familiar impulse. It is the same impulse that buys a treadmill in January and leaves laundry on it by March.

Overflow

I believe the real drama is not technological but organizational. The firms that benefit most from AI will not be the ones with the loudest announcements, the slickest demos, or the most feverish executives posting photographs from “AI transformation summits.” They will be the ones willing to perform the far less glamorous labor of clarifying ownership, cleaning processes, documenting exceptions, preserving institutional memory, and deciding, in plain language, who is accountable for what. This does not sound romantic. Neither did drainage systems. Yet cities depend on them. Water is useful only when the pipes can carry it where it needs to go. Otherwise it floods the street, backs into the cellar, and leaves everyone standing in the wreckage asking why abundance has become damage. AI poses much the same challenge. It can produce a flood of analysis, drafts, recommendations, and code. But if the underlying processes are broken, if ownership is vague, if no one knows where intervention rights live, then all that apparent intelligence does not create value. It creates overflow.

Legacy

The answer to the AI and jobs debate will not be found in slick demos or social media threads. The demo looks like a miracle: fast, cheap, elegant. But walk into any actual firm and you are not in a tech showcase. You are in a museum of bad decisions and legacy systems. Old systems still talk to older systems. Documentation is partial, stale, or mythical. Whole processes survive because a few people in operations know all the unofficial fixes and keep things moving when the formal system fails. A workflow introduced as a temporary patch in 2017 is still there because no one has had the time, clarity, or authority to replace it.

This is why so many predictions about the immediate obsolescence of outsourced coders have sounded more prophetic than true. Yes, in a clean environment, with clear architecture and good documentation, AI can produce startling gains. But most large organizations are not clean environments. They are historical settlements. They contain ruins, extensions, secret passages, and little shrines to earlier management fads. Their software is not merely technical. It is biographical. It records mergers, panics, regulatory scares, budget freezes, departmental rivalries, and those immortal words of corporate postponement: we will sort it out properly later.

Later, of course, arrives wearing a lanyard and carrying an AI strategy deck.

So the problem is never simply whether an AI model can generate code or draft a tool. The problem is whether the organization understands enough about itself to know what problem it is solving. Getting the most out of AI requires context, not just computational power. One must understand the surrounding architecture, the dependencies, the hidden liabilities, the exceptions, the habits of users, the workarounds people invented because the official process did not survive first contact with reality. When that context is missing, AI does not abolish labor. It reveals how much invisible labor was already holding the place together.

I think this matters beyond software. It tells us something useful about how organizations create value. Some roles genuinely do the hard work of coordination: they frame decisions, surface trade-offs, resolve conflicts, protect standards, and carry responsibility across functions that would otherwise drift apart. Those roles matter, and may matter even more in an AI-rich world. But there are also roles, and fragments of roles, built largely around repetition, translation, and administrative relay. AI is likely to compress those first. The distinction is not between managers and non-managers, or between leaders and workers. It is between work that sharpens judgment and work that merely recirculates it.

That is why the shock feels personal as well as organizational. AI can now perform, in seconds, many of the gestures that once signaled usefulness: the recap, the options paper, the strategic summary, the smooth rearrangement of anxiety into bullet points. And so institutions are forced to ask a sharper question. Which roles are creating real clarity, accountability, and decision quality, and which are mainly moving information from one room to another? The answer cannot merely be that the human being adds warmth around the edges. The answer has to be responsibility. It has to be the capacity to decide, to absorb consequence, and to know when a recommendation is unwise even when it is elegant.

It isn't just about the software. There is a deep, almost childish vanity in how modern institutions approach their tools. They assume that because a machine can generate an answer, the organization has become the kind of place that can use that answer wisely. These are not the same achievement. A recommendation, however polished, is not a decision process. A draft is not a governance model. Analysis is not accountability. The machine may tell you what appears efficient; it cannot tell you what is prudent, legitimate, humane, or bearable to the people who must live inside the result.

Judgment

This is why I believe the future of work will be less about replacement than about a redistribution of dignity and discomfort. Some tasks will become easier, cheaper, faster. That much is obvious. What is less often said, because it is less flattering, is that the burden removed from execution will reappear as pressure on judgment. If the draft can be produced instantly, what remains valuable is the person willing to say yes, no, not yet, or absolutely not under any circumstances.

For years, many professional roles have been protected by fog. One could survive, even flourish, by converting uncertainty into procedure and procedure into paperwork. Attend the meeting. Request the deck. Refine the wording. Summarize the options. Delay the conclusion. Escalate politely. Ask for alignment. Propose a working group. There are entire careers built on the skilled circulation of not quite deciding. AI is going to be a nightmare for these people. It is devastatingly good at faking productivity. It can churn out a deck in better prose than a VP, summarize the indecision with total confidence, and phrase a “no-update” email with unnerving calm. In a lazy institution, this will not feel like transformation. It will feel like bureaucracy on amphetamines.

And yet there is something clarifying in that prospect. When the rituals of competence become cheap, one begins to see more clearly what actual contribution looks like. Not polish. Not activity. Not the magnificent modern talent for generating artifacts. Contribution begins to look like ownership. It begins to look like someone who knows where the process starts, where it breaks, which exception matters, who absorbs the risk, and which decision cannot be delegated to an AI model no matter how persuasive its syntax may be.

Custody

This is why human oversight remains essential, and not in the pious, ceremonial sense that appears in policy documents. I do not mean the kind of oversight that consists of adding a sentence to the governance pack declaring that “a human remains in the loop,” as though the loop were a mystical object one could stand near while checking email. In many organizations, that phrase has become less a safeguard than a fresh instrument of administrative self-protection. It sounds like accountability while diffusing it. It suggests that somewhere, somehow, a responsible person exists, while carefully avoiding the far more dangerous task of naming who that person is, what authority they possess, and what they are required to do when the system goes wrong. I mean actual oversight.

I keep coming back to the apparently dull subjects that turn out not to be dull at all: process inventories, controls, ownership, monitoring, intervention rights, human oversight that is more than ceremonial. These phrases suffer from a public relations problem. They sound like the natural enemies of inspiration. But I think the opposite is true. Civilization depends on people who know exactly where responsibility lives.

We have spent too long praising disruption and too little time admiring custody.

Any serious use of AI requires someone to know where a process begins, where it can fail, who has authority to stop it, and what happens when the machine’s recommendation collides with law, ethics, customer reality, or ordinary human decency. Human oversight ought to mean exactly this: a named human being, with enough context and enough courage, prepared to interrupt the machine and answer for the interruption.

Perhaps that is why the deeper significance of AI feels, to me, almost political. We are told endlessly that the technology is intelligent. Fine. The more interesting question is whether the institution using it is adult. Does it know itself? Does it remember its own history? Can it distinguish efficiency from wisdom, throughput from legitimacy, recommendation from judgment? Tools do not answer these questions. They sharpen them.

The companies that thrive in this new world will not ask, first, “What can the model do?” They will ask, “What process are we trying to improve, what decision are we trying to support, who owns it, where are the failure points, and what institutional conditions would make the output usable?” This is a much less glamorous sequence of questions. It has the disadvantage of being serious. Yet seriousness, in periods of technological intoxication, is often the rarest competitive advantage.

Incentives

I am reminded of older moments when a new machine seemed poised to change everything. The rotary press mattered. The telegraph mattered. The assembly line mattered. The spreadsheet mattered. But in each case the machine alone did not determine the outcome. What mattered was the discipline of the institution around it, the habits it rewarded, the forms of authority it strengthened, the forms of stupidity it accelerated. Technology does not enter a vacuum. It enters a culture, and culture is where many revolutions go to die.

That is the danger now. Not that AI will suddenly eliminate human beings in one clean act of substitution, but that leaders will use its brilliance as a reason not to reform themselves. They will automate drafting while leaving ownership vague. They will accelerate workflows whose purpose was never quite clear. They will ask an AI model for recommendations in organizations still terrified of responsibility. They will discover, a little too late, that a faster confused institution is still confused.

More With More

That is why Nvidia CEO Jensen Huang’s recent remark about layoffs struck me as unusually direct. Asked why companies are firing people if AI is supposed to make everyone more productive, he answered, in substance, that imaginative companies will do more with more, while leaders who are out of ideas will use new capability merely as a pretext to do less. I think that is exactly right. AI does not automatically create ambition. It reveals whether ambition was there in the first place. In one company, greater capability becomes an argument for building more, serving more, attempting more. In another, it becomes an alibi for shrinking imagination and calling the retreat efficiency.

Jensen Huang frequently describes the modern data center as an “AI Factory,” a physical plant designed to produce a new commodity: tokens. In his view, compute is the raw fuel of the new economy, and for imaginative companies, this abundance allows them to “do more with more.” It is a compelling architectural vision of scale.

But as any operator knows, the more a factory produces, the more critical the role of the foreman becomes. Huang is building the infrastructure that generates the shipment; I am asking who will actually sign for it. Who is the foreman willing to take the blame when the shipment is wrong, when the logic is flawed, or when the “intelligence” produced by the factory ignores the “context” of the town it sits in?

If we follow the factory logic to its end, we realize that tokens are cheap, but the signature is expensive. The “more” that imaginative companies will do is not just more production; it will be more frequent and more difficult acts of discernment. They will use the factory to generate the options, but they will rely on a named human being to survive the result.

I also think there is a harder and more hopeful possibility. AI may force a confrontation with what serious work has always been. Not mere production. Not the pile of emails, the dense memo, the fevered performance of indispensability. Serious work is the assumption of consequence. It is the willingness to decide under conditions of uncertainty and to remain visible after the decision has been made.

For a long time, much of professional life has depended on the theater of effort. The full calendar. The polished deck. The midnight revision. The rhetoric of overload. AI is about to expose how much of this was costume. If a machine can generate in seconds what once occupied a team for a day, then the old symbols of diligence begin to look less like proof of merit than like relics from a regime of expensive inefficiency.

This will not be comfortable, especially for institutions that have long confused visible effort with actual value.

Yet what rises in place of that theater may be something better. If execution becomes cheaper, discernment becomes more precious. If drafting becomes instant, clarity becomes rarer. If recommendations multiply, accountability becomes the scarce good. The machine, in other words, may return us to an older truth: that the center of work was never typing. It was judgment.

Cultural Intelligence

There is even, I think, a bleak little joke at the heart of all this. For years, organizations behaved as though intelligence were the rare treasure. Now they possess machines that can simulate immense reserves of it on command, and they discover that the truly scarce things were responsibility, courage, institutional memory, and the willingness to say: this decision is mine.

Who supplies those scarce things? The answer, inconveniently, is still a person.

That is why the real transformation will happen where machine capability meets human ownership. Not where the software is most dazzling, but where the institution is most serious. AI will matter most in places mature enough to pair technical power with moral and organizational responsibility. Elsewhere it will remain what so many management fashions become in the end: an expensive new instrument for performing old evasions.

I do not believe, at least not in the short term, that AI will replace people in the grand theatrical manner so often advertised. There will be disruption, certainly, especially in fields built around routine content, routine service, and procedural output. But I believe AI will do something more unsettling. It will reveal what people were actually for. It will expose whether an organization knows its own processes, understands its own context, and possesses the nerve to act on better information without surrendering judgment.

The machine can prepare the case. The human being must still live with the verdict. That is not a footnote to the future of work. It is the whole argument.

Decide wisely

Colin

Image by Nathan Lemon on Unsplash

My favorite quotation is: "The important fact about AI is not that it can write. The important fact is that it cannot answer for what it writes." Human agency supplies the part that AI doesn't have and never will have: the spirit. A human being is more than a body. AI, IMHO, no matter how advanced it gets in time, will never be more than a tool, a machine. It may get smart enough to start misbehaving and causing trouble, but it will remain a machine. I may be seriously wrong, but I don't believe people were endowed with the ability to "breathe a spirit" into a machine and make it a living being. Which, I realize, may not be the point of your essay. I am just reflecting.

Compelling argument about governance being the bottleneck, but I wonder if you're being too generous to the pre-AI status quo. You describe organizations as "historical settlements" full of legacy chaos... but isn't that precisely the environment where human judgment was already failing quietly?

If the named human being who is supposed to sign for the decision was already hiding behind procedure and fog before AI arrived, why should we trust that same institutional culture to suddenly produce courageous, accountable people just because the stakes are clearer now?

Isn't it possible that AI doesn't just reveal the absence of governance? It reveals that real governance was always rarer than we pretended, and that the "theater of effort" you describe was itself a form of institutional self-deception we were all silently complicit in.

I wonder what it would actually take to build that culture of ownership from scratch, rather than assuming it exists somewhere, waiting to be activated.