Decisions Without Reasons

How Control Replaced Contemplation

This post builds on my last post, The Skynet Fallacy, which prompted much discussion in the comments.

I wrote a little while ago that young people should study philosophy (and, I think, chemistry or physics). A new paper states: “we looked at test and survey data from over 600,000 students. Our analysis found that philosophy majors scored higher than students in all other majors on standardized tests of verbal and logical reasoning.” The authors add: “…now more than ever, students must learn to think clearly and critically. AI promises efficiency, but its algorithms are only as good as the people who steer them and scrutinize their output.”

“Once men turned their thinking over to machines in the hope that this would set them free. But that only permitted other men with machines to enslave them.” ~ Dune (Frank Herbert)

Before we learned to automate our world, we sought to understand it. The rise of cybernetics represents the moment humanity traded the slow, difficult work of contemplation for the immediate, measurable rewards of control.

The Steersman’s Ambition

Cybernetics names a specific ambition. It is the attempt to understand and govern systems through feedback, control, and communication, whether those systems are machines, organisms, societies, or states. The word itself comes from the Greek kubernētēs, the steersman, the one who keeps a vessel on course amid disturbance. Long before computers, it described an art of guidance rather than an act of force. Even here, something human is quietly lost. To steer is already to accept that one’s own interior life can be treated as a system to be kept on course, rather than a space where judgment, doubt, and hesitation might matter.

Wiener vs Heidegger

The modern term was fixed in the 1940s by Norbert Wiener, working with physiologists, engineers, and mathematicians such as John von Neumann, who believed that animals, humans, and machines could be understood through the same principles of regulation. This move was not neutral. Cybernetics deliberately set aside intention, meaning, purpose, and interiority. It excluded motive in favor of behavior, ethics in favor of performance, and judgment in favor of correction. Signals replaced reasons. Feedback displaced deliberation. By the time of the Macy Conferences, cybernetics no longer named a discipline. It named a method that treated explanation as secondary to control and understanding as whatever allowed a system to remain stable.

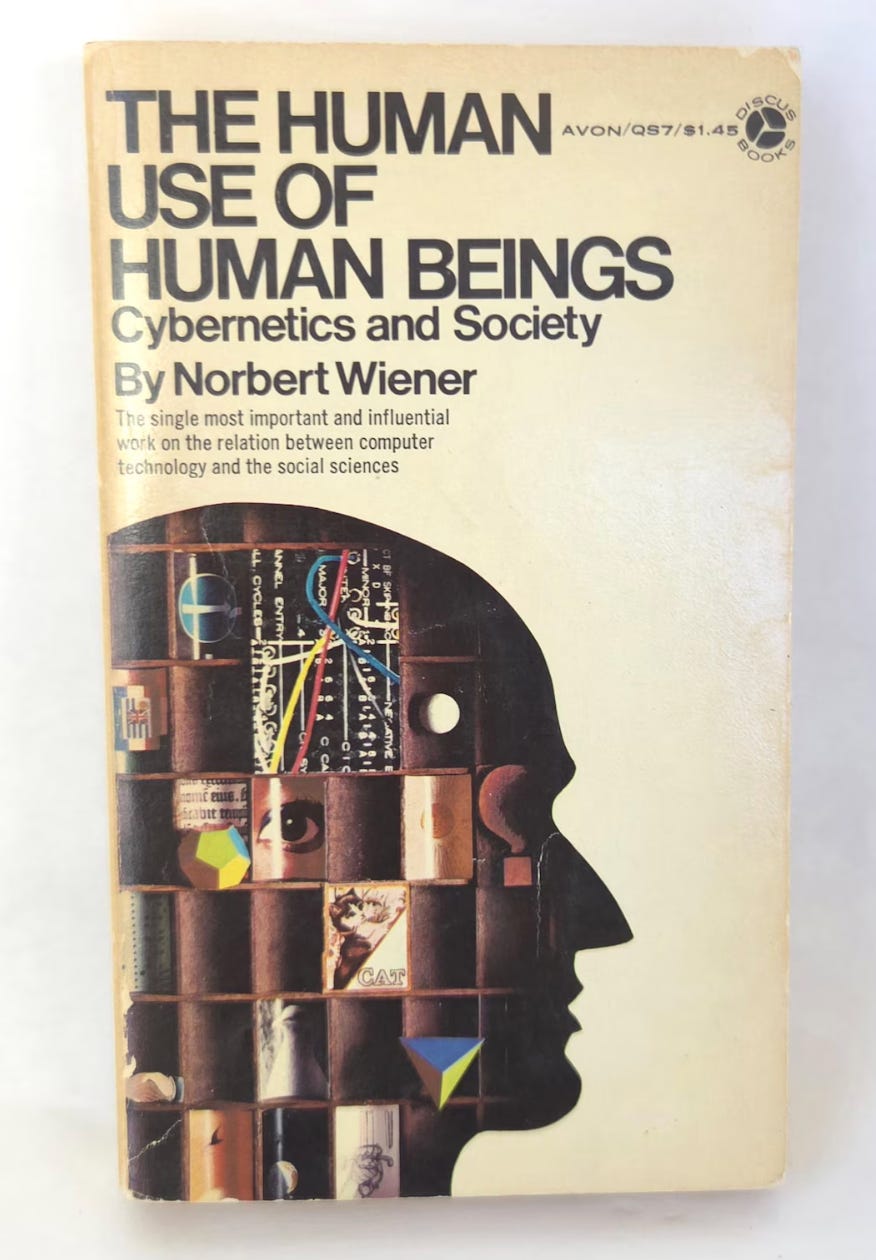

Here the distance between Norbert Wiener and Martin Heidegger becomes absolute. Wiener believed control was a moral advance. He saw feedback as a way to limit domination rather than extend it. In The Human Use of Human Beings, he insists that cybernetics can prevent the inhuman use of humans by replacing brute force with regulation, error-correction, and mutual adjustment. Control, for Wiener, was not tyranny but humility: the admission that no agent, human or machine, could command a complex system without listening to its responses.

Exclusion of Meaning

Heidegger saw the same structure and reached the opposite conclusion. What Wiener called listening, Heidegger called ordering. What Wiener treated as ethical restraint, Heidegger recognized as metaphysical capture. Feedback, from Heidegger’s perspective, does not humanize power. It perfects it by making domination continuous, automatic, and anonymous. There is no tyrant to resist because there is no longer a center of command. Control has become immanent to the system itself.

Wiener trusted that better information would lead to better outcomes. Heidegger denied that premise entirely. For him, the danger was not misuse but reduction. Cybernetics does not merely govern systems; it defines in advance what counts as a system. Anything that cannot be measured, regulated, or fed back disappears from relevance. Meaning does not get violated. It gets excluded.

This is the incompatibility that still haunts contemporary debates about Artificial Intelligence. Wiener believed cybernetics could serve human values if guided properly. Heidegger believed that once cybernetic thinking governs, values themselves are reformatted into variables. What Wiener hoped would limit power, Heidegger saw as its final refinement.

End of Philosophy

This is why Martin Heidegger could later claim that cybernetics marked the end of philosophy. In his 1966 Der Spiegel interview, he states bluntly that what follows philosophy is cybernetics, a science concerned not with truth but with steering, regulation, and control. Elsewhere he describes cybernetics as the moment when thinking gives way entirely to calculation, when beings appear only insofar as they can be ordered, predicted, and managed. I do not read this as drama. I read it as a jurisdictional shift.

For Heidegger, this shift belongs to what he calls Gestell, enframing: the mode of revealing in which everything that is, including human beings, shows up as standing-reserve, as something to be optimized and held ready for use. In The Question Concerning Technology, he writes that this form of revealing “challenges” nature and humanity alike, reducing them to resources within a system of control. Cybernetics, in this sense, is not just another technology. It is the completion of a metaphysical trajectory in which explanation collapses into control and meaning becomes whatever stabilizes the system.

And yet Heidegger does not stop at denunciation. He insists that where the danger is, there also grows what can save. Within enframing, he locates a concealed possibility of poiēsis, a bringing-forth rather than a steering. If cybernetics is the art of constant correction, poiēsis names a different stance altogether: letting beings emerge without forcing them into advance categories of control. It is not optimization, but patience. Not governance, but allowing what is to show itself.

Limits of Calculation

I take this as his wager. If cybernetics can be seen as one historical mode of revealing rather than an inescapable destiny, then thinking might again begin where calculation reaches its limit. Once systems could observe themselves, adjust themselves, and justify themselves by performance alone, the old need to argue about meaning began to look ornamental. I think this is the real inheritance of cybernetics: not machines that think, but decisions that no longer need reasons.

I notice this most clearly in how power now operates. Governance rarely announces itself as command. It presents itself as adjustment. The language is technical, procedural, calm. Inputs change. Outputs stabilize. Decisions arrive already formatted as metrics. In algorithmic hiring systems, in productivity scoring, in creditworthiness models, there is no official to confront and no rule to debate. There is only a screen that reports compliance or deviation, a form of listening stripped of any listener.

It is at this point that I feel the humor. Not because the situation is amusing, but because it is closed. Nothing has gone wrong. The system is doing exactly what it was designed to do. And there is nothing left to oppose except a dashboard.

I think we need more precision about something many contemporary debates miss. Cybernetics is not a device, a discipline, or a historical episode. It is a metaphysical settlement. It resolved an argument that had run since Plato by declaring that control is superior to contemplation.

Familiar and Uncanny

I believe this is why modern artificial intelligence feels both familiar and uncanny. It does not introduce a new logic. It intensifies an old one. The early cyberneticians spoke of a human use of human beings. I think that phrase should make us uneasy. It assumes that the central problem was cruelty rather than substitution: the trade of human judgment for systemic output. What we have instead is a regime where judgment migrates into systems and responsibility diffuses into process. No one decides. The system decides that it has decided.

Feedback loops reward continuation. Each correction justifies the next. A system adjusts to excess by tightening itself, then interprets the tightening as proof that it must continue adjusting. Over time, this self-correcting motion becomes self-destructive, not because it breaks, but because it never stops. One more iteration never looks like excess from the inside.

When Demis Hassabis said we need more philosophers, I sense that he meant an epistemological reconstruction. I see his point as a demand to recover the capacity to ask what kind of beings we are becoming under systems that learn faster than we can judge. This is not a technical question. It is a moral one that no longer sounds moral.

Erasing Accountability

What troubles me most is the ambition to erase the distinction between organism and machine. I believe that ambition has succeeded at the level that matters most: accountability. When systems fail, we investigate pipelines, not purposes. That is not a flaw. It is the worldview functioning correctly.

I am convinced that cybernetics is not our future but our present tense. We explain ourselves through stability, equilibrium, and resilience. Meaning looks inefficient by comparison. What resists this logic is not rebellion but refusal: the decision to pause where systems demand acceleration, to ask what cannot be optimized, and to accept forms of judgment that do not resolve into metrics. The final surrender would not be being controlled by systems, but becoming the systems we once believed we were merely steering.

Stay curious

Colin

Quote from Michel Foucault, "Power, a Magnificent Beast"

"Power doesn't always shout. It almost never strikes head-on. Truly dangerous power whispers, manages, nurtures, normalizes. It disguises itself as science, security, the "common good." It doesn't tell you "obey me": it classifies you, corrects you, diagnoses you. It doesn't punish you for what you do, but for what you could become. When power ceases to resemble power, when it becomes routine, a file, an expert opinion, a protocol, then it becomes a magnificent beast: efficient, elegant, devastating.

Prison doesn't fail. It works. It works when it produces criminals, when it manufactures fear, when it justifies surveillance, when it turns injustice into normality. It works when judicial error is not an anomaly but a technique. When torture is not excess, but reason. When the system needs culprits to keep breathing. The problem isn't that power makes mistakes: it's that it often does exactly what it was designed to do.

That's why criticism doesn't serve to reassure or offer easy solutions. It serves to unsettle. To make suspicious what seemed natural. To break the anesthesia. Because resisting today is not always about seizing power: sometimes it is something more difficult and more urgent —learning to see how it operates, where it hides, who it silently crushes— and refusing, with lucidity and dignity, to continue being governed in that way."

“Cybernetic inheritance” - “not machines that think, but decisions that no longer need reasons.” The loss of due process as “there is no official to confront and no rule to debate. There is only a screen that reports compliance or deviation, a form of listening stripped of any listener.” The loss of reflection and “an argument that had run since Plato by declaring that control is superior to contemplation.” Discipline is demanded, not decided in mutual understanding.

The signal as the stick denying deliberation. Independent action denied, independent thought cancelled. “Explanation as secondary to control.”

Could you say this is “reasoning at the speed of light” in that its arrival comes in a flash so bright it blinds us?