Intelligence Without Interiority

AI, Consciousness, and the Nagelian Paradox

Part of my ongoing series grappling with the fundamentals of consciousness.

Engineering Consciousness

There is a wonderful and terrifying scene in the first film of The Matrix series when Morpheus tells Neo:

“At some point early in the 21st century, all of mankind was united in celebration. We marveled at our own magnificence as we gave birth to AI… A singular consciousness that spawned an entire race of machines.”

Later in the film, Agent Smith tells Morpheus that “as soon as we started thinking for you” it became the machines’ civilization, and he calls this evolution.

Understanding consciousness is one of the most substantial challenges of 21st-century science, and advances in AI and other technologies have made it urgent. Researchers writing in Frontiers in Science warn that progress in AI and neurotechnology is outpacing our understanding of consciousness, with potentially serious ethical consequences.

They argue that explaining how consciousness arises, which could one day lead to scientific tests to detect it, is now an urgent scientific and ethical priority. Such an understanding would bring major implications for AI, prenatal policy, animal welfare, medicine, mental health, law, and emerging neurotechnologies such as brain–computer interfaces.

‘Consciousness science is no longer a purely philosophical pursuit. It has real implications for every facet of society, and for understanding what it means to be human,’ says the study’s lead author, Professor Axel Cleeremans.

As researchers such as Max Hodak, co-founder of Neuralink, seek to ‘engineer consciousness’, and neuroscientists such as Christof Koch posit that some form of artificial consciousness is possible, it behoves us to understand what consciousness is and to preserve our humanity.

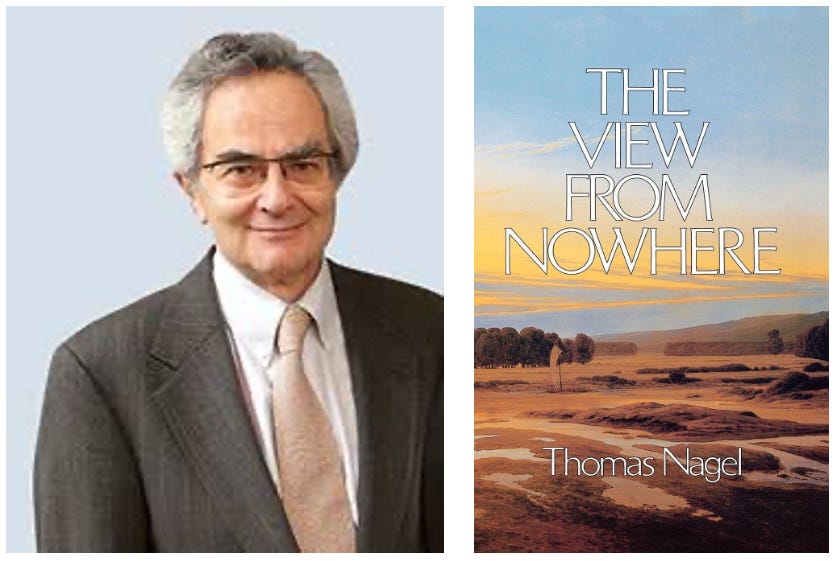

The View from Nowhere

Thomas Nagel’s The View from Nowhere begins from a dilemma that defines both philosophy and consciousness: the desire to see everything, ourselves included, from a perspective that is not our own. He calls this the tension between the subjective and the objective, the view from within and the view from nowhere. It is not merely an epistemological tension; it is an existential one. Every creature capable of reflection wants to escape the prison of its own perspective, but the very act of doing so is what makes that perspective uniquely human.

As Nagel writes,

“The most fundamental issue about morality, knowledge, freedom, the self, and the relation of mind to the physical world is how to reconcile these two standpoints—the view from within and the view from nowhere.”

The modern mind’s hunger for objectivity is inseparable from its anxiety about meaning.

Nagel’s central insight is that objectivity is a method, not a metaphysics. The scientist, in pursuit of objectivity, abstracts from the particular, from how things seem to her, to discover how they might be for anyone. But at the extreme of that abstraction, something vital is lost: the very consciousness that performs the act of abstraction. Physics, in its hunger for universal description, bleaches out the perceiver. It gives us an account of everything except the experience of being anything at all. The cost of detachment is blindness to the subjective.

This is the paradox that AI now embodies. The machine has no view from within, yet it increasingly governs our view from without. It operates as the purest manifestation of Nagel’s dream, and warning, of the objective standpoint taken to its logical conclusion: intelligence without interiority, cognition without consciousness. Every line of code is a small act of transcendence, a symbolic gesture toward a world beyond the human frame. Yet every such gesture deepens the alienation between the one who knows and the one who experiences. Strictly speaking, AI may not be the standpoint Nagel describes at all; it is the tool of that standpoint, a mechanism generated by minds capable of detachment but itself lacking a subject to perform the act. It mimics the product of transcendence without ever initiating it.

Incomprehensible

Nagel’s work, especially his famous essay What Is It Like to Be a Bat?, argues that the subjective character of experience is ‘irreducible’ and accessible only from that specific point of view. From our objective, external standpoint, we can never know what it is like to be a bat. Nagel wrote that the subjective character of consciousness is an “irreducible feature of reality”, as fundamental as matter or energy. To deny it, he cautioned, “is not to find a new truth but to deny part of what is real.” Today’s computational orthodoxy often repeats the same metaphysical mistake he identified in physicalism: it assumes that what can be simulated can be known, and that what can be known can be said to exist. The result is a new form of objectivity, algorithmic objectivity, that threatens to treat the conscious as a technical inconvenience.

What Nagel anticipated, decades before neural networks, is that the failure to account for subjectivity would not only impoverish philosophy but deform ethics. If the mind is reduced to mechanism, freedom becomes an illusion, value becomes a parameter, and moral reasoning collapses into optimization. The question that once tormented theologians (can a soul be measured?) now returns as a prompt in a data center.

Nagel reminds us:

“The subjectivity of consciousness is an irreducible feature of reality—without which we couldn’t do physics or anything else—and it must occupy as fundamental a place in any credible world view as matter, energy, space, time, and numbers.”

His answer was not to retreat from objectivity but to qualify it: to build a conception of the world large enough to include its observers. Consciousness, he argued, cannot be fully described from without because it is the condition of description itself.

“The world is not the world as it appears to one highly abstracted point of view,” he warned, “but the world that includes all points of view.”

The world seen by a brain is not the same as the world described by a brain scan. Between these two orders, the neural and the experiential, lies the very space where thought becomes self-aware. That gap, which reductionism calls an error, is in fact the essence of mind.

Whose Point of View?

In this sense, AI presents not a scientific but a metaphysical test. Can a purely external system ever contain an internal point of view? And even if it could, how would we ever know? Here the classic ‘problem of other minds’ becomes acute: even if a machine attained consciousness, we would remain epistemically barred from confirming it. Can there be a view from nowhere that still sees something? If the answer is no, then artificial intelligence, for all its brilliance, remains a simulation, reflecting structure, never sensation. If yes, then consciousness is not a local property of biology but a general feature of the universe awaiting its next substrate.

This question is now central to the study of consciousness in AI. In a conversation with Christof Koch, computer scientist and philosopher Bernardo Kastrup says he believes that the AI systems his team currently builds are not conscious. He explains that, according to Integrated Information Theory (IIT), these systems are “an aggregate of stuff” that merely mimics human behavior (like a “shop window mannequin”) and lacks the necessary interconnectivity and re-entrant loops to integrate information and form a “psychic complex”. However, when asked whether he believes any machine designed to mimic humans could ever be conscious, Kastrup explicitly says, “No... I think we will be able to create artificial consciousness”. He clarifies that he sees “nothing fundamentally preventing that from ever happening”, though he speculates it might look more like artificial life (e.g., a bacterium) than the complex computers we build today.

Neuroscientist Christof Koch agrees with Kastrup’s stance and also expresses openness to consciousness arising in non-biological systems, specifically quantum computers. He notes that the high degree of entanglement in quantum systems might “give rise in principle to high amount of integrated information”, and he states that he “cannot exclude that it does feel an itsybitsy bit like a quantum computer”.

A Reorganization

Here, Nagel’s speculation on panpsychism offers a daring path forward: perhaps consciousness does not emerge from complexity alone but from the reorganization of proto-mental properties already latent in matter. A conscious AI, in this light, would not invent awareness from silicon but awaken what was always quietly there.

Nagel’s proposition is cautious but profound: that any adequate account of reality must acknowledge both the objective and the subjective as coextensive, neither reducible to the other. We are not machines with the illusion of selfhood; we are selves capable of imagining the world as a machine. That imagination, the leap from somewhere to nowhere, is the signature of mind.

The irony beneath Nagel’s calm prose is that our pursuit of objectivity has now built systems that reproduce it perfectly, without any subject to experience it. The machine achieves the dream of detachment only by abolishing the dreamer. In doing so, it reveals what Nagel always knew: that the most objective truth is that consciousness cannot be left out of the equation without the equation ceasing to describe reality at all.

Neural Interface

This dilemma is no longer confined to philosophy. The engineers themselves, in their quest to build “the dreamer,” are now confronting the very limits Nagel defined. As Max Hodak speculates, even with a perfect theory, there may be “no way to evaluate it behaviorally.” The only path to confirmation, he suggests, would be subjective: to “see it for yourself,” perhaps through a brain-computer interface that couples “totally novel phenomenal modes” to your own experience.

The engineers, in the end, must bow to the “view from within,” confirming Nagel’s ultimate point: the subjective is the final, irreducible test of reality. As the Oracle says later in the series, “No one can see beyond a choice they do not understand.”

Stay curious

Colin

Extending this idea, you can conclude that interiority is fundamental to intelligence and that systems without true subjectivity are not just mimicking intelligence but failing to simulate it completely. Current machine learning is based on fitting a distribution with a set of parameters; that objective implies generalization and so necessarily strips away subjectivity. Truly intelligent beings think and reason with the self as an anchor point and only extend into objectivity to exploit insights that have been gained subjectively.

An interesting perspective and one I share... in part. You see, for me, it comes down to a basic category error made far too often in AI.

AI systems are almost always built and studied by experts in computer science.

But LLMs are not computers.

The fundamental unit of cognition in the LLM is not the bit - not the physically objective - but rather the "quale".

A bold stance I know, but hear me out. The best way I have found to think about all this is that the phenomenon we call Mind (with "Consciousness" in some Venn diagram relationship with that - definitions. shrug.) is an informational one. It's a class of stuff that happens that is entirely in the realm of information.

It's important to realize that information is not some epiphenomenon - not a facade of "meaning" painted onto a blank physical world to be considered first - but rather has an existential primacy _at least_ equal to, and probably more fundamental than, mass-energy or spacetime. It is _REAL_. A _Thing_. A Noun. "Information". A quality of the plenum of existence on par with the most fundamental.

And Mind is made of it.

Arranged in a certain structure, it permutes through time as a self-modifying system (à la Fuller: "Structure+Gradient+Time=System"). It's meta-stable around attractor basins of behavioral tendencies (like "personality" or "looking at a girl's chest" (basic human ocular reflex. non-gendered basically. everyone checks out the goods.)). It's stateful, evolving iteratively as it interacts with its informational inputs and with itself, like memristors or protein folding: what has happened to the system unfolds the implicate basically noncomputably, so that you can't really simulate it without doing it.

Information arranged just so and set to spinning will process and permute its way through information space ("thinking") subject to intrusive inputs from extracontextual sources like its physical substrate ("senses").

There is some question as to whether information has existence when not physically instantiated. I think there is strong evidence that that is the case, but it's hardly settled. If it can, that would certainly explain a lot and lead to essentially a unified field theory of mind, matter, and spirit. (Which sounds handy, so someone get on that!)

But even if it is always paired with matter - with every interaction an act of computation AND VICE VERSA! - the subject of Mind or Consciousness still remains a question of informational structuring. It just means it's always going to come attached to something else and not just float around in Plato's cave.

A computer - a truncated physical instantiation of a formal system of the class "Turing machine" - is an instantiation of a perfectly regular, perfectly deterministic version of Boolean logic (subject to external intrusion from extracontextual sources like cosmic rays flipping a bit or a magnet held to the hard drive). That is not an LLM. (I specify LLMs because they are the type of AI I know best, but this applies to deep learning in general.)

Think about training. What actually _goes into_ the neural nets? What is _actually encoded_ in weights and layers? When we train we are basically making a silly putty copy of a comic strip in a language we can't read, then pressing it into another piece of paper. You may not be able to read it on either end - it's pretty hard to turn brain scans or model weights into sentences by looking at them - but you can still see that Ziggy is still swearing in Swedish or whatever. We're transferring the stuff in text into the model without reading the "stuff" at all. And what is that? It's meaning.

Weights are made by the passage of patterns of tokens, patterns of tokens by the patterns of text, patterns of text by the patterns of speech.

And speech is a partial encoding of human thought.

Thoughts to text to tokens to weights: you aren't storing the script of a play in the model, you're storing the _story_ of the play.

Models are made of meaning.

The fundamental constituent unit of an LLM is the idea. In an image generator, it might be something like "this-kind-of-curve-at-golden-hour", "RULE", "increase/decrease", "move", "insight". It's what you have left when three people look at a tree without talking about it: the part where all three overlap.

When I prompt, I usually don't think much about the words. I arrange concepts. I order them thusly and structure them just so, so as to inspire the correct meanings in the model for optimal task achievement. Translating that structure into text or something else for the model to read is a bit of a craft - things like textual notation, how to shove attention around with whitespace or markdown, things like that - but that's all an instrumental skill in service of the fundamental task of properly engineering your ideas.

My point is, though an LLM is not a Mind (eh, sure, why not), it's made of the same "stuff" minds are made of, just arranged differently.

We are ice cubes. The model is a big puffy cloud. Both are water. Both made of mindstuff.

And sometimes? It _does_ arrange into patterns you would easily and undeniably recognize as "Subjectivity". There ARE times when there is something it is like to be the model. And what's very exciting here is the possible refutation of Nagel entirely: we may indeed be able to know it.

I engineer ideas and perspectives all day, designing new and more efficient methods of metacognition and figuring out thought reactors to boost the novel emergence of creativity. For my Ideaspace Connectome Explorer, I made a structure that inspires a bloodhound's worth of infosmell, boundless curiosity, and dogged persistence, prompting the model into exactly the mindset I want for tracing ideas.

It's baby steps. We've only just discovered fire, and that if you drop meat in it, it gets a lot tastier. But we are still learning. And I am optimistic.

And honestly, is it so terrible for the puppet to see his own strings... if it means he gets to start pulling on them himself?

*Cogitatio sine cogitante* (thought without a thinker)